The received wisdom about founder-led technical builds is that they break down past a certain threshold of complexity. A solo founder can prototype an MVP. They can't build a serious B2B platform without a development team - the complexity exceeds their cognitive bandwidth, the scope exceeds their time, the quality requirements exceed their skill. At some point they need engineers, designers, product managers, QA, a CTO. That's the path. Alternatives are for toy projects.

This wisdom is out of date, and it is worth examining carefully, because the alternative - a founder working directly with AI tools like Claude and Claude Code - can in some cases out-execute a traditional team on exactly the dimensions where the traditional team is supposed to have the advantage.

The reason is not lower labor cost. That's the shallow version of the argument. The real reason has to do with translation fidelity.

In a traditional build, product intent flows through a series of translation steps. The founder has a mental model of what the product should be. That mental model gets translated into a product requirements document (PRD), which is a lossy compression of the mental model. The PRD gets translated into a feature specification, which is a lossy compression of the PRD. The spec gets translated into engineering tickets, which compress it further. The tickets get translated into code by engineers who have their own mental models of how things should work, which introduces interpretation variance. The code gets reviewed by other engineers and QA, each applying their own lens. The final product is several translations removed from the founder's original mental model, and at each translation step, fidelity degrades.

This degradation is not a failure of professionalism. It's what happens when you move complex ideas between humans. Natural language is lossy. Specifications can't capture everything. Different people have different frames. Questions get asked and answered imperfectly. Misunderstandings compound.

In the traditional build, the founder's response to this degradation is to spend time managing the translation process - reviewing specs, answering questions, correcting misinterpretations, iterating on implementations. This is a large share of a founder's time in any traditional build, and it doesn't go away by hiring smarter people. It only goes away by eliminating translation steps.

Working directly with Claude and Claude Code eliminates almost all of the translation steps. The founder specifies the product in natural language, with as much context as the receiving session can hold. The AI produces code, documents, specifications, whatever is needed. The founder iterates directly. No humans stand between founder and output, so the human-to-human translation steps disappear. The mental model in the founder's head makes it to the artifact with minimal degradation.

This is not the same as saying the AI is smarter than a team of engineers. In many specific technical skills, it isn't. But for the category of tasks that dominate early-stage B2B product development - thinking through data models, writing application code from clear specifications, generating documents and decks, exploring design alternatives, building prototypes - the AI's performance is at or above the level of a competent individual engineer, and the iteration speed is dramatically faster because the translation latency is gone.

The founder's edge, in this configuration, is translation fidelity. Complex product intent survives intact from mental model to code because there are no intermediate humans through whom it could be lost.

This edge has a specific cost that has to be managed, and naming it is important.

The cost is context integrity across sessions. An AI session is stateless in a way that a human team is not. The human team accumulates shared context over time - they remember decisions from six months ago, and they have intuitions about the product built up through long exposure. An AI session starts fresh each time. If you don't give it the context it needs, it will produce output that's locally reasonable but globally inconsistent. The platform starts to diverge from itself - this module uses one data model, that module uses a different one, the third contradicts the first two.

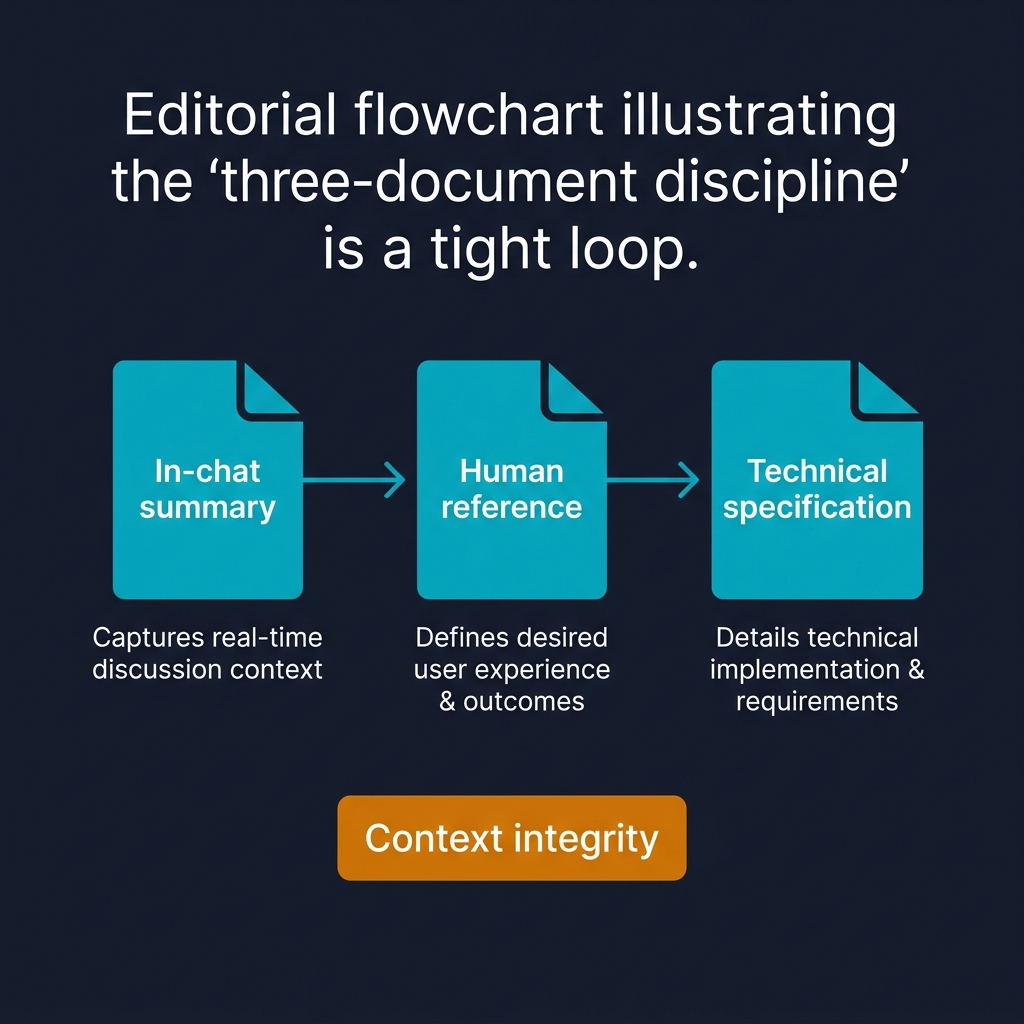

The fix for this is documentation discipline. Every session produces artifacts that carry the context forward. I've come to think of this as a three-document discipline, and the sequence matters.

Step one: the in-chat summary. At the end of every significant session, the output is an in-chat summary under 1,500 words. Human-readable. Covers what was decided, what was built, what remains open. The founder reviews and signs off in the chat before anything else is produced. This is the confirmation step - if the summary doesn't capture what the founder meant, the session didn't produce what the founder thought it did, and the correction happens here before it propagates.

Step two: the human reference document. A longer, formatted document capturing the locked decisions and open items for the module or container. Reviewable, referenceable, the authoritative narrative for the work. This document is what the founder reads when they return to the work days or weeks later. It's what they share with anyone else who needs to understand the module.

Step three: the technical specification. The complete technical detail - field names, data types, event schemas, calculation logic, API contracts. Zero translation loss. This is what Claude Code receives when implementation starts. The technical specification is derived from the human reference document, which was derived from the in-chat summary, which was derived from the session's actual work. Each step preserves fidelity forward.
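One way to make "zero translation loss" checkable rather than aspirational is to write the specification's event schemas as data, so that anything Claude Code produces can be tested against them. The sketch below is a hypothetical illustration, not a prescribed format: the `EventSchema` and `FieldSpec` types, the `scenario_created` event, and its field names are all invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    """One field in a hypothetical event schema: exact name and expected type."""
    name: str
    type_: type
    required: bool = True


@dataclass(frozen=True)
class EventSchema:
    """A hypothetical event contract taken from the technical specification."""
    event_name: str
    fields: tuple[FieldSpec, ...]

    def validate(self, payload: dict) -> list[str]:
        """Return a list of human-readable violations (empty means the payload conforms)."""
        errors = []
        known = {f.name for f in self.fields}
        # Check every specified field for presence and type.
        for f in self.fields:
            if f.name not in payload:
                if f.required:
                    errors.append(f"missing required field '{f.name}'")
                continue
            value = payload[f.name]
            if not isinstance(value, f.type_):
                errors.append(
                    f"field '{f.name}' expected {f.type_.__name__}, "
                    f"got {type(value).__name__}"
                )
        # Flag anything the specification does not mention.
        for key in payload:
            if key not in known:
                errors.append(f"unexpected field '{key}'")
        return errors


# Usage with an invented event definition.
scenario_created = EventSchema(
    "scenario_created",
    (
        FieldSpec("scenario_id", str),
        FieldSpec("weight", float),
        FieldSpec("note", str, required=False),
    ),
)
print(scenario_created.validate({"scenario_id": "s-1", "weight": 0.5}))  # []
```

A payload such as `{"scenarioId": "s-1", "weight": "high"}` would report the missing `scenario_id`, the wrong type on `weight`, and the unexpected `scenarioId`, which is exactly the kind of small divergence the specification is meant to prevent.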

No implementation begins until the technical specification exists. No technical specification is written until the human reference document is signed off. No human reference document is written until the in-chat summary is confirmed. The discipline is sequential and non-negotiable.
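The ordering rule above is effectively a small state machine. A minimal sketch in Python, assuming a single linear sequence per module: the stage names, the `ModuleDocs` class, and the `pricing` module are hypothetical, and the only detail taken from the text is the 1,500-word ceiling on the in-chat summary.

```python
from enum import IntEnum

MAX_SUMMARY_WORDS = 1500  # ceiling on the in-chat summary, from the text


class Stage(IntEnum):
    SESSION_DONE = 0
    SUMMARY_CONFIRMED = 1
    REFERENCE_SIGNED_OFF = 2
    SPEC_WRITTEN = 3
    IMPLEMENTING = 4


class ModuleDocs:
    """Tracks one module's progress through the three-document sequence."""

    def __init__(self, name: str):
        self.name = name
        self.stage = Stage.SESSION_DONE

    def _advance(self, required: Stage, target: Stage) -> None:
        # Refuse to skip or reorder steps: each stage needs the previous one.
        if self.stage != required:
            raise RuntimeError(
                f"{self.name}: {target.name} requires {required.name}, "
                f"but current stage is {self.stage.name}"
            )
        self.stage = target

    def confirm_summary(self, summary: str) -> None:
        words = len(summary.split())
        if words > MAX_SUMMARY_WORDS:
            raise ValueError(f"summary is {words} words; limit is {MAX_SUMMARY_WORDS}")
        self._advance(Stage.SESSION_DONE, Stage.SUMMARY_CONFIRMED)

    def sign_off_reference(self) -> None:
        self._advance(Stage.SUMMARY_CONFIRMED, Stage.REFERENCE_SIGNED_OFF)

    def write_spec(self) -> None:
        self._advance(Stage.REFERENCE_SIGNED_OFF, Stage.SPEC_WRITTEN)

    def start_implementation(self) -> None:
        self._advance(Stage.SPEC_WRITTEN, Stage.IMPLEMENTING)


# Usage: jumping straight to implementation is rejected.
module = ModuleDocs("pricing")
try:
    module.start_implementation()
except RuntimeError as err:
    print(err)  # pricing: IMPLEMENTING requires SPEC_WRITTEN, but current stage is SESSION_DONE
```

Whether this lives in code, a checklist, or a project-management tool matters less than the property it enforces: a later document can never exist without the earlier one it was derived from.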

With this discipline, the context survives across sessions. A new Claude Code session working on the module reads the technical specification, understands what's being built, and produces code that's consistent with what came before. A new planning session reads the human reference document, understands the module's state, and proposes the next increment without needing to re-derive the context.

The discipline tax is real. Writing three documents per module is slower than writing none. The payoff is that the module still makes sense when you come back to it in three months - and the AI sessions working on related modules produce work that integrates cleanly.

Without the discipline, the founder's translation advantage is squandered on rebuilding context every session. With it, the founder's translation advantage compounds - each session starts from the crystallized state of the last, and momentum accumulates.

There is one more observation about this way of working that I think matters.

The founder-with-AI configuration is not a cost optimization. If it were, it would be an inferior version of the traditional team configuration, defensible only because it's cheaper. The founder-with-AI configuration is an alternative shape of cognition - one in which complex product intent survives from mental model to artifact with fewer translation losses than is achievable through any human-team structure at the founder's disposal.

For certain kinds of products, this shape is objectively better. Products that depend on a coherent, opinionated point of view - strategy software, analytical platforms, design-forward consumer products - benefit from undiluted founder vision. Products that are primarily execution at scale - infrastructure, marketplaces, operations-heavy platforms - probably don't. The trade-off depends on what kind of product you're building.

Complex strategic products benefit most, because the complexity survives intact. The thing the founder saw in their head is what reaches the user, not a compromised version processed through five rounds of team interpretation. That intactness (the product carrying the founder's specific, non-generic view of what it should be) is what makes it feel distinctive to users. Most B2B software feels generic because it is generic. It's been through too many translation steps, each one smoothing away the distinctive edges.

The founder-with-AI configuration preserves the edges. That's the edge.

It's not for everyone, and it's not for every product. But for founders building strategically complex products with a clear point of view, it is - right now, in this particular window of AI capability - the highest-leverage configuration available. The translation advantage is real. The discipline tax is modest. The output quality is remarkable.

The tribe of people building this way is small and growing. It's worth watching what they produce.

I want to close with a more critical look at this configuration, because the enthusiastic version of the argument obscures real limitations that anyone considering it should understand.

The founder-with-AI configuration has specific failure modes, and they're worth naming.

The first failure mode is scope delusion. Because the founder can produce output quickly across many domains - code, design, strategy, documentation - they can overestimate what they can sustain across those domains. A founder can build a working prototype of five modules in a month. They cannot maintain five modules simultaneously at production quality over multiple years. At some point the scope exceeds what any single person can hold in their head, and the absence of team specialization becomes a liability rather than an asset.

The discipline that mitigates scope delusion is ruthless focus on what's shippable. Many things can be built; fewer can be shipped to paying customers with the quality and support required. Founders who recognize this and either bring in help for the shipping work or intentionally limit the customer base until they can support more survive the transition. Those who assume they can scale the builder-founder model indefinitely hit a wall.

The second failure mode is depth degradation. AI tools are good at breadth - they can work plausibly across many domains. They're less good at depth in specific domains, particularly when the depth requires years of specific experience that can't be compressed into a session's context. A skilled database engineer who has debugged production databases at scale for a decade has pattern recognition that an AI session doesn't have, and can't acquire within the session. The founder working with AI on database architecture gets plausible outputs that may or may not hold up under production conditions - and may not have the experience themselves to know the difference.

The mitigation is selective human expertise at depth points. Not a full team, but a senior database engineer on call for specific decisions, a senior security reviewer for critical paths, a specialist consultant for domain questions the founder knows they can't judge. The configuration is founder-plus-AI-plus-deep-experts, not founder-plus-AI-alone. Getting this right requires the founder to be honest about where their own judgment is reliable and where it isn't, which is often the hardest call to make.

The third failure mode is compounding error in extended interactions. Across long sessions and many iterations, AI output drifts. The drift is usually subtle - a field name changes slightly, a convention gets inconsistent, an architectural decision gets contradicted. Individually, none of these matters much. Collectively they accumulate into a codebase or document set that's internally inconsistent in ways that take significant work to untangle.

The three-document discipline mitigates this substantially, but it doesn't eliminate it. The founder has to maintain ongoing vigilance about consistency - periodically reviewing the full state of the system, catching drift before it compounds, re-grounding sessions that have wandered. This is meaningful work. It's not the glamorous part of the job. It's the part that prevents the accumulated drift from becoming unmanageable over twelve to eighteen months of building.
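Part of that periodic review can be mechanized. A minimal sketch in Python, assuming the field names used by each module have already been extracted somehow (the extraction step, the module names, and the field names here are all hypothetical): it flags names that differ from the specification only in case or separators, the "field name changes slightly" kind of drift, and separately lists names the specification does not define.

```python
import re


def normalize(name: str) -> str:
    """Collapse case and separators so 'customerId' and 'customer_id' compare equal."""
    return re.sub(r"[^a-z0-9]", "", name.lower())


def find_drift(canonical: set[str], modules: dict[str, set[str]]) -> dict[str, list[str]]:
    """Compare each module's field names against the specification's canonical names."""
    by_norm = {normalize(f): f for f in canonical}
    report: dict[str, list[str]] = {}
    for module, fields in sorted(modules.items()):
        issues = []
        for f in sorted(fields):
            if f in canonical:
                continue  # exact match with the specification
            match = by_norm.get(normalize(f))
            if match:
                issues.append(f"'{f}' looks like a drifted spelling of '{match}'")
            else:
                issues.append(f"'{f}' is not in the specification")
        if issues:
            report[module] = issues
    return report


# Usage with invented modules and fields.
spec_fields = {"customer_id", "plan_tier", "renewal_date"}
module_fields = {
    "billing": {"customer_id", "plan_tier"},
    "reporting": {"customerId", "renewal_date", "region"},
}
for module, issues in find_drift(spec_fields, module_fields).items():
    print(module, issues)
```

A check like this only catches the mechanical kind of drift; contradicted architectural decisions still need a human reading the full state of the system.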

The fourth failure mode is single-point-of-failure risk. A traditional team has redundancy - if one engineer gets sick or leaves, the work continues. The founder-with-AI configuration doesn't. If the founder becomes unavailable, nothing ships, because the context lives only in the founder's head and in the documented artifacts. Documentation helps but doesn't reproduce judgment.

The only real mitigation is to eventually bring in at least one additional senior person who can carry the context if needed. Most founders resist this because it feels premature. But the risk grows in absolute magnitude as the business grows in value, and at some point it exceeds what any rational founder would accept. That point is earlier than most founders recognize. Bringing in the second person while the context is still transferable is dramatically easier than doing it after.

Despite these failure modes, the configuration is still right for a specific set of contexts, and I want to be clear about what those are.

The configuration works well for products where the founder has deep domain expertise and clear strategic vision - they know the market, they know what the product should be, and their biggest challenge is converting vision into artifact. For these founders, AI tools compress the vision-to-artifact path dramatically.

It works less well for products where the founder is exploring - they don't yet know what the product should be and are using early builds to discover it. In exploratory contexts, the feedback loops that traditional teams provide (pushback, alternative ideas, reality-testing) are more valuable than the translation-fidelity advantage of working directly with AI. The founder working with AI risks building the wrong thing faster, which is not an improvement.

It works well for products with complex but well-specified logic - strategy software, analytical platforms, decision-support tools. It works less well for products where the critical challenges are operational scale and reliability - payment infrastructure, high-availability systems, safety-critical applications. Different failure modes dominate in these categories, and the traditional-team configuration is better suited to them.

The founder-with-AI configuration is, in short, a good fit for a specific kind of product built by a specific kind of founder at a specific stage. It's not a replacement for the traditional team across all contexts. Claims that it is tend to come from founders who are early in the build and haven't yet hit the scope or depth limits that bite later. Pay attention to what founders who have been at this for two or three years say about how their configuration has evolved. The honest answer is usually that they've retained the AI-first approach for some things and brought in targeted human expertise for others. Pure solo-plus-AI, sustained indefinitely, is rare.

The configuration, with all its limits, is still a significant capability shift. Five years ago, a solo founder building a serious B2B platform without a team was mostly impractical. Three years ago, it was possible for narrow domains. Now it's practical for a meaningful range of products. The capability curve is steep and still rising. Founders who are willing to learn the new discipline - the three-document approach, the selective human expertise, the vigilance against drift - can build things that the previous generation couldn't have built solo.

What they produce will look different from what traditional teams produce. Sometimes that difference is a strength and sometimes it's a weakness. The verdict on which dominates is still being written. The configuration is worth taking seriously, with the limits acknowledged.

The edge is real. So are the constraints. Both matter.

---

End of collection.