Every major consulting firm has a filing cabinet full of route-to-market recommendations that were adopted on paper and never implemented in the field. Some of them are excellent. The diagnosis is correct. The prescriptions are sound. The segmentation of outlets is defensible. The recommended distributor footprint is economically rational. The DSR sizing is grounded in genuine field research. And yet eighteen months later, almost none of it has been implemented, and the client is back at the same diagnostic stage with a different firm. This is not because the client was lazy or the consultant was wrong. It is because there is no architectural bridge between a recommendation and a live operating configuration, and the human effort required to build that bridge from scratch is almost always larger than the bandwidth the organisation can invest.

The standard consulting failure mode

A typical RTM engagement goes like this. A senior partner is brought in to diagnose why a brand's commercial performance in a region is below peer benchmarks. A team of three to five consultants spends eight to twelve weeks conducting distributor interviews, outlet audits, competitor analysis, and financial modelling. They produce a final deck with a clear story: the outlet universe has been incorrectly segmented, the distributor footprint has two overlaps and three gaps, the DSR incentive structure is rewarding the wrong behaviour, and the SKU portfolio should be rationalised by 30%. The client's commercial leadership reviews the findings, agrees with most of them, and asks for an implementation plan. The plan comes back in a second deck, with a timeline, a budget, and a recommended programme management structure.

The second deck is where it dies. The recommendations are correct, but they require the client's commercial team to simultaneously reconfigure their sales planning system, their distributor management processes, their territory definitions, their DSR deployment, and their trade spend allocation - all without a system that can absorb those changes as inputs. Each recommendation becomes its own project. Each project requires a sponsor, a budget, a timeline, and a change-management exercise. The organisation cannot run seventeen projects simultaneously. So they pick the three easiest and drop the rest. Eighteen months later, the diagnostic findings are still relevant, mostly unimplemented, and the deck sits in a filing cabinet while the commercial performance continues to drift.

Bain got PETRONAS right. PETRONAS had no system to execute.

The specific inspiration for this architecture came from watching this pattern play out at a large Asia-Pacific lubricants brand. The consulting firm's diagnosis was strong. The distributor viability analysis was rigorous. The territory redesign was defensible. The SKU-channel fit matrix was well-constructed. The recommendations, if implemented, would have added measurable revenue and reduced working capital commitment across the network. They were not implemented - not because the brand disagreed with them, but because there was no operating system that could absorb the recommendations and turn them into live configurations for the commercial team to operate. The deck was filed. The work was done. The outcome was no different than if the engagement had never happened.

This is not an indictment of consulting firms. It is a structural observation about the gap between diagnostic work and operational execution. Consulting firms produce documents because documents are what they sell. Operational execution requires systems, and systems are built by different firms with different economics. The gap between the document and the system is where value goes to die.

The architectural fix - the diagnosis IS the configuration

The architectural fix is to collapse the gap. An RTM Assessment should not produce a document. It should produce a live configuration of the operating system that the client is about to use. When the assessment recommends that an outlet tier should have a minimum basket value of MYR 1,200 to qualify as Elite, that number becomes the tier threshold in the live system. When the assessment recommends that the distributor hurdle rate for this market is 14% - composed of the market weighted-average cost of capital plus a 4% risk premium for the category - that number becomes the hurdle rate in the distributor P&L engine. When the assessment recommends a specific DSR-to-outlet ratio for a specific tier mix, that ratio becomes the capacity check in the territory design module.
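To make the idea concrete, here is a minimal sketch of assessment outputs stored directly as live settings. The class and function names are hypothetical, not the platform's actual API. The 10% market WACC is only what the 14% hurdle rate and 4% premium imply, and the 120-outlets-per-DSR figure is an invented placeholder, since the text gives no ratio. The sketch also flattens the tier-mix-specific ratio into a single number.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssessmentConfig:
    """Parameters adopted in the assessment, stored as live settings."""
    elite_min_basket_myr: int   # MYR 1,200 Elite threshold from the text
    market_wacc_bps: int        # assumption: 1000 bps, implied by 14% minus 4%
    risk_premium_bps: int       # 4% category risk premium from the text
    max_outlets_per_dsr: int    # hypothetical: the text gives no ratio

    @property
    def hurdle_rate_bps(self) -> int:
        # Hurdle rate = market WACC + category risk premium. Integer basis
        # points avoid float drift (0.10 + 0.04 is not exactly 0.14).
        return self.market_wacc_bps + self.risk_premium_bps


def qualifies_as_elite(basket_value_myr: float, cfg: AssessmentConfig) -> bool:
    """Tier check used by a (hypothetical) outlet segmentation engine."""
    return basket_value_myr >= cfg.elite_min_basket_myr


def clears_hurdle(distributor_return_bps: int, cfg: AssessmentConfig) -> bool:
    """Viability check used by a (hypothetical) distributor P&L engine."""
    return distributor_return_bps >= cfg.hurdle_rate_bps


def within_dsr_capacity(outlets: int, dsrs: int, cfg: AssessmentConfig) -> bool:
    """Capacity check used by a (hypothetical) territory design module."""
    return outlets <= dsrs * cfg.max_outlets_per_dsr


# Illustrative values only; 120 outlets per DSR is a placeholder.
cfg = AssessmentConfig(
    elite_min_basket_myr=1200,
    market_wacc_bps=1000,
    risk_premium_bps=400,
    max_outlets_per_dsr=120,
)
```

The point of the sketch is that nothing sits between the recommendation and the check: change the number in the assessment and the engine's behaviour changes with it.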

The assessment and the configuration are the same artifact. The client does not receive a deck. They receive a login. Their first view of the platform shows the distance from the diagnostic baseline: every recommended metric, its current actual value, a traffic light against the baseline, and a drill-down into the underlying data. The implementation plan is not a separate document. It is the set of actions the platform has already surfaced, ranked by impact, with owners and deadlines assigned during the workshop that closed the assessment.
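A rough sketch of how that first view could be computed follows. Everything in it is an assumption made for illustration: the metric names, the sample values, and the amber and red thresholds (any shortfall is amber, more than 10% is red). Ranking by shortfall stands in for the "ranked by impact" ordering, which the text does not define.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    name: str
    baseline: float              # value recommended by the assessment (non-zero)
    actual: float                # value currently observed in the field
    higher_is_better: bool = True

    def shortfall_pct(self) -> float:
        """Distance from baseline in percent; positive means behind baseline."""
        gap = self.baseline - self.actual
        if not self.higher_is_better:
            gap = -gap
        return 100.0 * gap / self.baseline


def traffic_light(shortfall_pct: float,
                  amber_above: float = 0.0,
                  red_above: float = 10.0) -> str:
    """Map a shortfall to a colour. Thresholds are hypothetical defaults."""
    if shortfall_pct > red_above:
        return "red"
    if shortfall_pct > amber_above:
        return "amber"
    return "green"


def first_view(metrics: list[Metric]) -> list[tuple[str, float, float, str]]:
    """Rows for the landing view, worst shortfall first."""
    ranked = sorted(metrics, key=lambda m: m.shortfall_pct(), reverse=True)
    return [(m.name, m.actual, m.baseline, traffic_light(m.shortfall_pct()))
            for m in ranked]


# Invented sample data, not figures from any real assessment.
rows = first_view([
    Metric("distributor_return_bps", baseline=1400, actual=1330),
    Metric("elite_outlet_coverage_pct", baseline=100, actual=85),
    Metric("outlets_per_dsr", baseline=120, actual=110, higher_is_better=False),
])
```

In a real deployment the ranking key would more plausibly be revenue or working-capital impact than raw percentage shortfall.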

The handshake layer

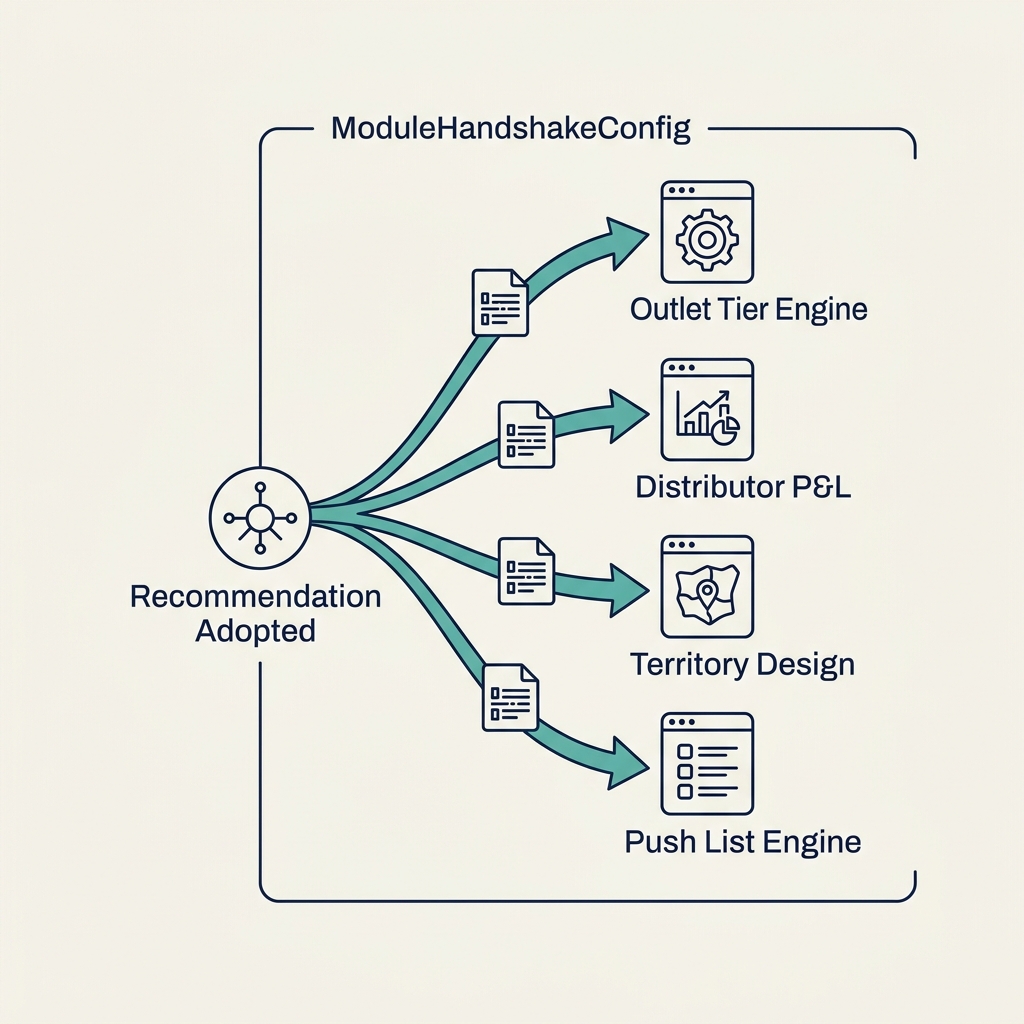

Technically, this works through what the architecture calls a handshake - a structured layer that captures, for every recommendation adopted during the assessment, the parameters that produced it, the logic that generated it, and the downstream module configurations that depend on it. When the recommendation is adopted, the handshake fires. The tier thresholds propagate to the outlet segmentation engine. The hurdle rates propagate to the distributor scoring. The territory definitions propagate to the routing engine. The SKU postures propagate to the push list engine. Every adopted recommendation seeds a specific, traceable configuration change in a specific, downstream module.
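The propagation described above can be sketched as a small data structure. This is an illustration under stated assumptions, not the platform's implementation: `Recommendation`, `Handshake`, `fire`, the module names, and the setting keys are all hypothetical, and downstream modules are modelled as plain dictionaries.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass(frozen=True)
class Recommendation:
    rec_id: str
    parameters: dict[str, Any]            # inputs that produced the recommendation
    logic: str                            # reasoning that generated it
    changes: dict[tuple[str, str], Any]   # (module, setting) -> new value


@dataclass
class Handshake:
    """Applies adopted recommendations and records where each value came from."""
    modules: dict[str, dict[str, Any]] = field(default_factory=dict)
    lineage: list[dict[str, Any]] = field(default_factory=list)

    def fire(self, rec: Recommendation) -> None:
        for (module, setting), value in rec.changes.items():
            # Seed the downstream module's configuration...
            self.modules.setdefault(module, {})[setting] = value
            # ...and keep a traceable record of why the value is what it is.
            self.lineage.append({
                "module": module,
                "setting": setting,
                "value": value,
                "rec_id": rec.rec_id,
                "logic": rec.logic,
                "parameters": rec.parameters,
            })


hs = Handshake()
hs.fire(Recommendation(
    rec_id="REC-001",  # hypothetical identifier
    parameters={"currency": "MYR"},
    logic="Elite tier requires a minimum basket value",
    changes={("outlet_segmentation", "elite_min_basket_myr"): 1200},
))
hs.fire(Recommendation(
    rec_id="REC-002",  # hypothetical identifier
    parameters={"risk_premium_bps": 400},
    logic="Hurdle rate = market WACC + category risk premium",
    changes={("distributor_scoring", "hurdle_rate_bps"): 1400},
))
```

Writing the configuration change and the lineage entry in the same step is the design choice that matters here: a setting cannot exist in a module without a record of the recommendation that put it there.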

The traceability is the second-order win. When a Regional MD logs into the platform six months after go-live and asks "why is this SKU's push priority so high in the modern trade channel," the platform can answer: "Because the RTM Assessment in February identified this SKU as having an 88 eCommerce channel fit score and a managed-parallel posture, which raised its offline push priority in non-eCommerce-fulfilment channels by 15 points, adopted in the April handshake, executed in the May release." Every configuration has a lineage back to a decision. Every decision has a lineage back to the evidence that produced it. The platform is not a black box. It is a cumulative record of commercial decision-making, with the underlying logic fully exposed.
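A lineage lookup of this kind might look like the following sketch. The record fields, the `explain` function, and the module and setting names are hypothetical. The evidence, months, and 15-point uplift are taken from the example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LineageEntry:
    module: str        # downstream module that holds the setting
    setting: str       # the configured value being questioned
    effect: str        # what the adopted recommendation changed
    evidence: str      # finding from the assessment that justified it
    assessed_in: str
    adopted_in: str
    released_in: str


def explain(log: list[LineageEntry], module: str, setting: str) -> str:
    """Answer 'why is this setting what it is?' from the lineage log."""
    for entry in reversed(log):  # the most recent matching change wins
        if entry.module == module and entry.setting == setting:
            return (
                f"Because the RTM Assessment in {entry.assessed_in} identified "
                f"{entry.evidence}, which {entry.effect}, adopted in the "
                f"{entry.adopted_in} handshake, executed in the "
                f"{entry.released_in} release."
            )
    return "No lineage recorded for this setting."


log = [
    LineageEntry(
        module="push_list_engine",          # hypothetical module name
        setting="push_priority:SKU-X",      # hypothetical setting key
        effect=("raised its offline push priority in "
                "non-eCommerce-fulfilment channels by 15 points"),
        evidence=("this SKU as having an 88 eCommerce channel fit score "
                  "and a managed-parallel posture"),
        assessed_in="February",
        adopted_in="April",
        released_in="May",
    )
]

answer = explain(log, "push_list_engine", "push_priority:SKU-X")
```

Searching newest-first is an assumption about how superseded decisions should be handled. A fuller version would probably return the whole chain of changes, not only the latest one.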

The $30K engagement that writes its own proposal

This is why the assessment is priced the way it is. A traditional consulting RTM engagement costs between $500K and $3M depending on scope and firm. The final deliverable is a PowerPoint. The $30K assessment produces a configured operating system, a board-ready RTM review PDF, and an implementation pathway that is already running. It is not a smaller version of the consulting engagement. It is a different product. The consulting firms cannot compete with it at that price point because their cost base is consultants, not software. The software competitors cannot compete with it at that depth because their platforms start from a blank dashboard, not a diagnostic baseline.

The economic consequence is a commercial motion where the assessment is the entry wedge, the platform is the expansion, and the subscription is the long-term relationship. The client who buys the assessment is already on the platform. The platform already holds their diagnostic baseline. Expanding to Module 2 or Module 3 is not a new sale - it is an upgrade path the client has already started. This is why the diagnosis-as-configuration architecture is strategically important. It is not just a better product. It is a different business model, one where the act of selling the first engagement creates the dependency that makes the expansion inevitable.