A friend recently asked me, during a lull in a build session, how hard it would be for someone to replicate the platform we were building. I gave him the honest answer: easier than you'd like, harder than starting from scratch. Anyone with valid credentials and a network inspector can map the API surface in an afternoon. The frontend bundle is public - run any decent reverse-engineering tool on the Cloudflare-served JavaScript and significant portions of the React component logic become readable. The routes are there, the response shapes are there, and formula inputs go in and outputs come out in predictable patterns. A determined engineer with three months of weekends could build something that looks the same from the outside.

That's the honest part. Here's the less obvious part: it wouldn't matter.

The code is a delivery mechanism. The actual moat is elsewhere, and understanding where it lives is the difference between building a product that gets commoditised and building one that compounds.

The three layers of the moat

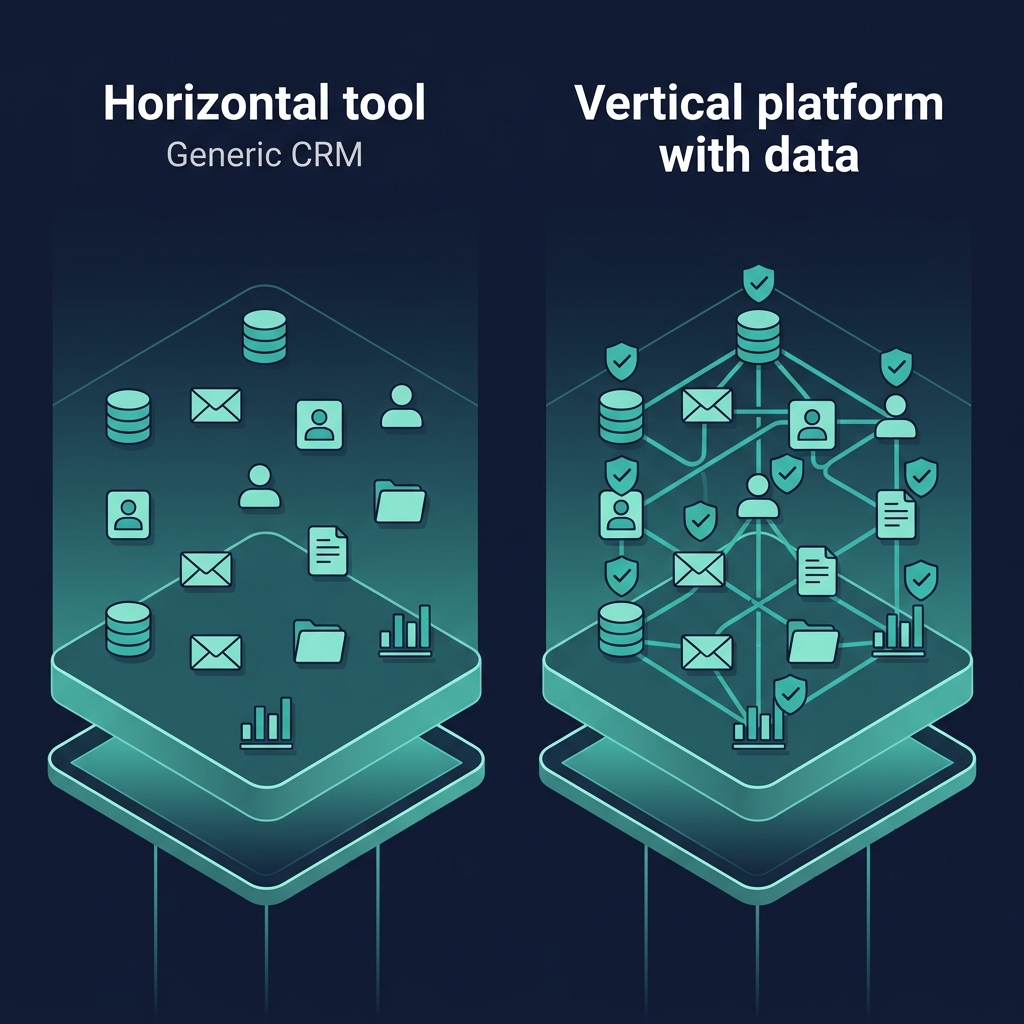

The first layer is methodology. A vertical platform like this encodes a specific way of thinking about a problem - in our case, how to assess whether a distributor is viable, how to design a territory that doesn't double-count districts, how to calculate a defensible DSR count that survives a CFO's scrutiny, how to cascade confidence through a chain of formulas so that every number carries its own epistemic weight. None of that is in Salesforce. A client could run Salesforce and still have no idea how to answer any of those questions. Salesforce is a horizontal CRM - it can do anything, which means it does any specific thing poorly. The vertical thesis is that methodology, well-encoded, is worth more than generality. That's the first layer.
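To make "cascade confidence through a chain of formulas" concrete, here is a minimal sketch of one way it could work, assuming the simplest possible rule: a computed number is only as trustworthy as its weakest input. The `Confidence` levels, the `Estimate` type, and the DSR arithmetic are all hypothetical illustrations, not the platform's actual formulas.

```python
from dataclasses import dataclass
from enum import IntEnum


class Confidence(IntEnum):
    """Hypothetical ordering: a higher value means a more trusted number."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class Estimate:
    """A number that carries its own confidence level."""
    value: float
    confidence: Confidence


def combine(value: float, *inputs: Estimate) -> Estimate:
    """Attach the weakest input confidence to a derived value (assumed rule)."""
    return Estimate(value, min(i.confidence for i in inputs))


def dsr_count(outlets: Estimate,
              visits_per_outlet: Estimate,
              visits_per_dsr: Estimate) -> Estimate:
    """Illustrative chain: required visits first, then headcount from capacity."""
    required_visits = combine(
        outlets.value * visits_per_outlet.value, outlets, visits_per_outlet
    )
    return combine(
        required_visits.value / visits_per_dsr.value, required_visits, visits_per_dsr
    )


result = dsr_count(
    Estimate(1200, Confidence.MEDIUM),  # outlet universe, still an estimate
    Estimate(4, Confidence.HIGH),       # monthly visits per outlet
    Estimate(400, Confidence.HIGH),     # monthly visits one DSR can make
)
print(result.value, result.confidence.name)
```

The point of the design is that the medium-confidence outlet count drags the final DSR number down to medium as well, so nobody downstream can mistake a derived figure for something firmer than its inputs.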

The second layer is the handshake between modules. Module 1 produces a set of outputs - territory boundaries, distributor P&L baselines, DSR counts, viability verdicts, outlet universe counts, archetype classifications - and Module 2 consumes them as its starting point. Without Module 1, Module 2 is just another CRM with manually entered targets. With Module 1, it's a workspace where the strategic baseline is already computed, defensible, and anchored in the client's actual data. You can imagine building Module 2 standalone; you cannot imagine it being as useful. The moat is the handshake, not the workspace. This is the same reason Figma's real advantage isn't the editor - it's the handoff to engineering, which integrates with code in ways Adobe can't replicate without rebuilding its entire stack.

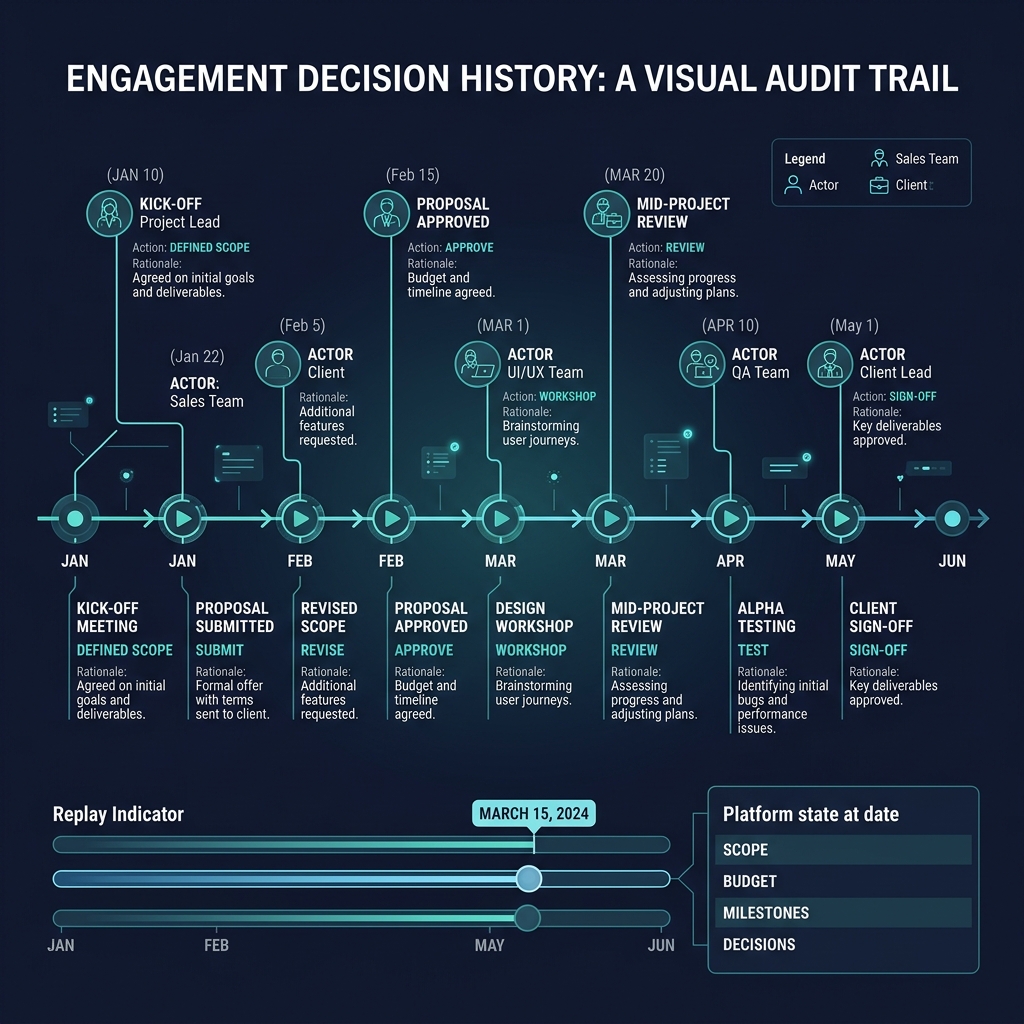

The third layer, and the one that actually compounds over time, is the data network effect. Every field visit, every sell-out data point, every distributor audit, every consumer interview feeds back into the confidence levels in the platform. When an engagement begins, outlet universe counts are AI-estimated - medium confidence, labelled as such. After three months of field research, they become field-verified - high confidence. After twelve months of DSR visit logs reconciling against them, they become client-owned - the highest confidence tier. The same cascade happens for visit frequencies, archetype penetration rates, territory market sizes, and distributor capability scores. A competitor would need to run the same engagement from scratch and spend twelve months collecting the same data to match what a live client has accumulated. That's not replicable without time, and time is the one thing you can't compress.
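The tier progression described above can be sketched as a simple promotion rule. This is a rough illustration only: the `Tier` names mirror the labels in the text, the three-month and twelve-month thresholds come from the paragraph above, and the `DataPoint` fields are hypothetical stand-ins for whatever evidence the platform actually tracks.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    AI_ESTIMATED = 1    # medium confidence, labelled as such
    FIELD_VERIFIED = 2  # high confidence
    CLIENT_OWNED = 3    # highest confidence tier


@dataclass
class DataPoint:
    """Hypothetical record of how much evidence backs a single metric."""
    name: str
    months_field_research: int = 0
    months_dsr_reconciliation: int = 0


def tier_for(dp: DataPoint) -> Tier:
    """Promote a metric as evidence accumulates (assumed thresholds)."""
    if dp.months_dsr_reconciliation >= 12:
        return Tier.CLIENT_OWNED
    if dp.months_field_research >= 3:
        return Tier.FIELD_VERIFIED
    return Tier.AI_ESTIMATED


day_one = DataPoint("outlet_universe")
month_three = DataPoint("outlet_universe", months_field_research=3)
year_one = DataPoint("outlet_universe", months_field_research=12,
                     months_dsr_reconciliation=12)

for dp in (day_one, month_three, year_one):
    print(dp.name, tier_for(dp).name)
```

Nothing in the rule lets a competitor skip a tier: the only inputs are months of accumulated evidence.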

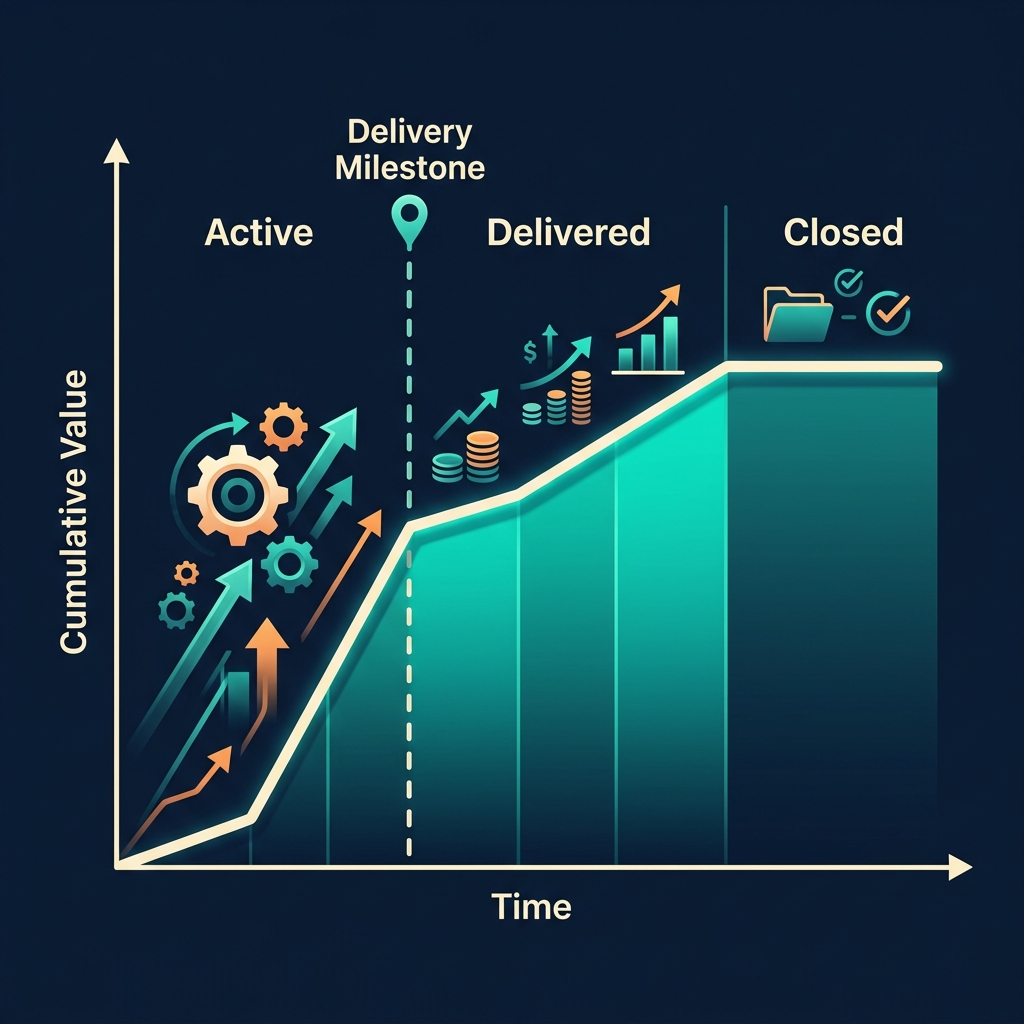

What this means for pricing and positioning

If the moat compounds over time, the pricing should too. A platform where the value grows month over month as data accumulates should not be sold on a flat subscription. It should be sold on an ascending ladder: cheaper in Year 1 when the model is still proving itself against AI-estimated baselines, more expensive in Year 2 when the client is making decisions based on field-verified data they couldn't reconstruct elsewhere, most expensive in Year 3 when the switching cost includes rebuilding an entire analytical foundation. Most SaaS pricing models do the opposite - they discount renewals to reduce churn. That's correct for horizontal tools where the value plateaus. It's wrong for vertical tools where the value accrues.

Positioning follows the same logic. The pitch in Year 1 is about methodology - "we know how to do this work better than you do." The pitch in Year 3 is about accumulated advantage - "your competitors can't see what you can see because they haven't been running the same engagement for three years." Both are true; they just emphasise different layers of the moat at different points in the customer lifecycle.

The risk worth protecting against

If the methodology is the first layer of the moat, the real risk isn't a developer cloning the repository. A developer cloning the repository gets a shell. The real risk is a consultant who runs engagements on the platform, absorbs the methodology, and takes it to a competitor - either by building something similar internally or by joining a rival. That's an NDA and engagement terms problem, not a technical one. The defensive posture is contractual and cultural, not cryptographic. Non-competes on senior partners. Clear IP language on methodology ownership. Internal training that makes the methodology feel proprietary and sophisticated rather than commoditisable. None of that is a platform feature; it's how the business is run.

There are technical protections worth adding before the first live client engagement, but they're secondary: rate-limit the API per user so a malicious insider can't bulk-extract data; strip intermediate formula values from responses for non-SP roles so the calculation chain isn't visible on the wire; rotate the Gemini API key quarterly so that if shell access is ever compromised, the cost exposure is bounded. All good hygiene. None of them are the moat.
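For the first two protections, here is a minimal sketch of what they might look like, assuming a sliding-window limiter held in memory and a response payload that keeps its intermediate values under a single key. The class name, the `intermediates` key, and the limits are hypothetical; a real deployment would more likely enforce this at the gateway or with a shared store.

```python
import time
from collections import defaultdict, deque


class PerUserRateLimiter:
    """Sliding window: at most `limit` requests per `window_s` seconds per user."""

    def __init__(self, limit: int, window_s: float, clock=time.monotonic):
        self.limit = limit
        self.window_s = window_s
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self._hits = defaultdict(deque)

    def allow(self, user_id: str) -> bool:
        now = self.clock()
        hits = self._hits[user_id]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self.window_s:
            hits.popleft()
        if len(hits) >= self.limit:
            return False
        hits.append(now)
        return True


INTERMEDIATE_KEY = "intermediates"  # hypothetical field holding the calculation chain


def shape_response(payload: dict, role: str) -> dict:
    """Return the full payload to SP users; strip intermediates for everyone else."""
    if role == "SP":
        return payload
    return {k: v for k, v in payload.items() if k != INTERMEDIATE_KEY}


limiter = PerUserRateLimiter(limit=100, window_s=60)
payload = {"dsr_count": 12, "intermediates": {"required_visits": 4800}}
if limiter.allow("analyst-7"):
    print(shape_response(payload, role="analyst"))
```

Filtering by a single key keeps the rule easy to audit: anything a formula wants hidden from non-SP roles goes under that key and nowhere else.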

The uncomfortable corollary

If the moat compounds through data, then you are worst-defended on day one. The first live engagement has no accumulated data, no field-verified archetypes, no twelve-month DSR log reconciling outlet counts. You are selling methodology and hope. The competitive risk is highest at exactly the moment the platform is most fragile. That's not a reason to wait - you can never accumulate the data without starting - but it is a reason to be deliberate about who the first three clients are. Pick clients who will generate the cleanest data, who will push the methodology hardest, and whose engagements will seed reference architectures for everyone who follows.

By the time the data network effect is obvious, the competitive question is answered. The work is making sure you're still in business when it shows up.