There are two failure modes that dominate commercial analytics, and both are so common that most organizations consider them the normal state of things.

The first is AI-only output. A model ingests public data, applies its algorithms, and produces confident numbers with no operational grounding. The model says the territory supports 14 DSRs. The model says the outlet universe is 8,200 stores. The model says the distributor margin should be 14.5%. Every number is precise. None of them has been verified against anything a human who operates in the territory would recognize. A Jakarta territory lead reads the output and says: "The outlet universe is not 8,200 stores. We have 240 stores we list in and another 1,400 that are real but we don't visit. The rest is modeled from census data and it's mostly wrong." The model was built without the territory lead's knowledge, so it cannot incorporate it.

The second is client-only input. A consulting team gathers inputs from the commercial organization, builds a strategy on those inputs, and presents recommendations grounded in the organization's operational knowledge. The recommendations are plausible. They're also parochial. The commercial team knows their territories. They don't know the territories they've never seen, can't benchmark against markets they don't operate in, and bring the blind spots of the incumbent view. What looks like a grounded strategy is a well-dressed version of "here's what we already think."

These two failure modes are usually discussed as if they were alternatives - you either trust the AI or you trust the operators. In practice, the answer is neither alone. It is a structured integration of both, and the integration is more difficult than either failure mode would suggest.

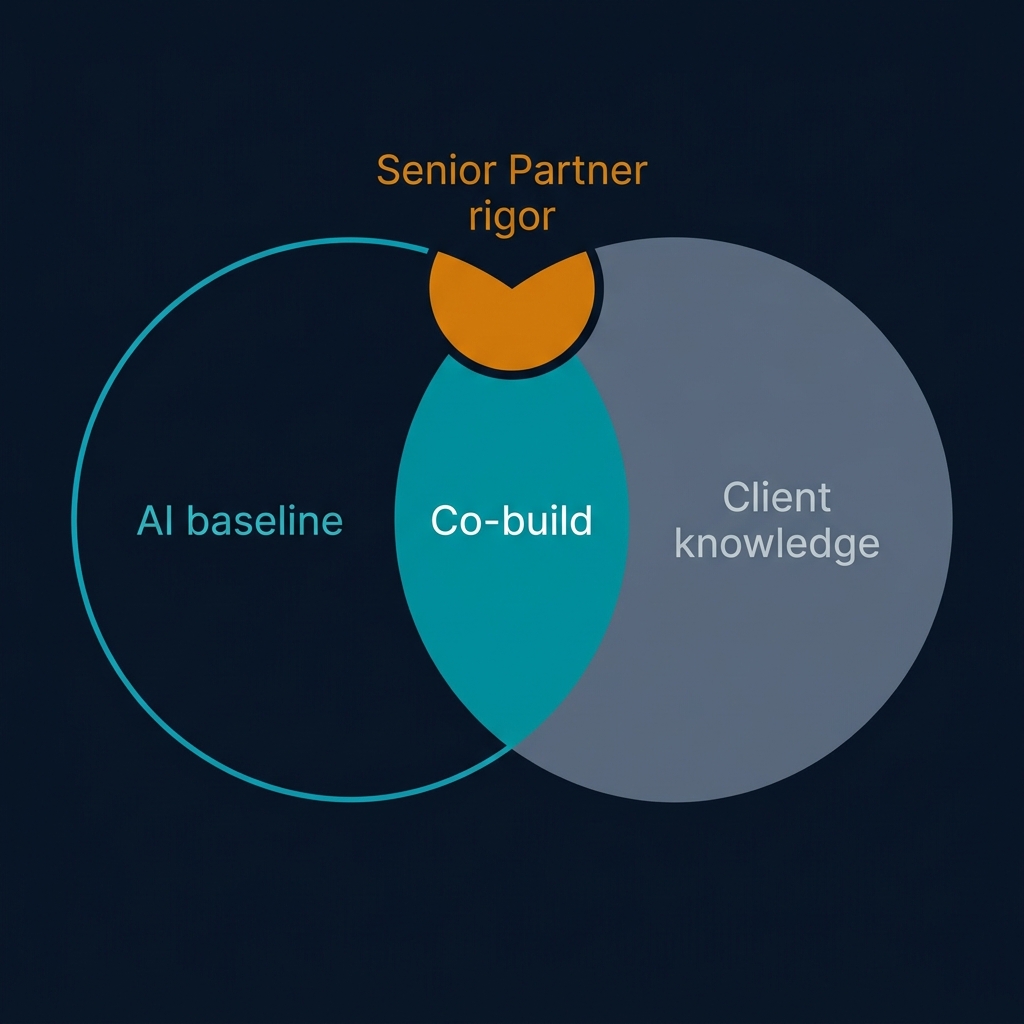

The principle I keep returning to is this: no significant conclusion should be AI-only or client-only. Every major output of a strategic analysis - outlet universe, territory boundary, distributor capability assessment, financial viability - should be co-built. AI provides the analytical baseline. Consulting expertise (the Senior Partner in our terminology) provides the rigor and the benchmark perspective. Client operational teams provide the ground truth. Field verification closes the confidence gap where the tenant chooses to invest in it.

This sounds obvious. It is not how most organizations actually work. Most organizations get an AI output, a consultant's interpretation, and an internal team's reaction, and then whichever of the three is backed by the most senior person in the room wins. The co-build principle requires all three to be in the room, with a structured protocol for how they interact.

The specific mechanism that makes co-build work is the reconciliation record.

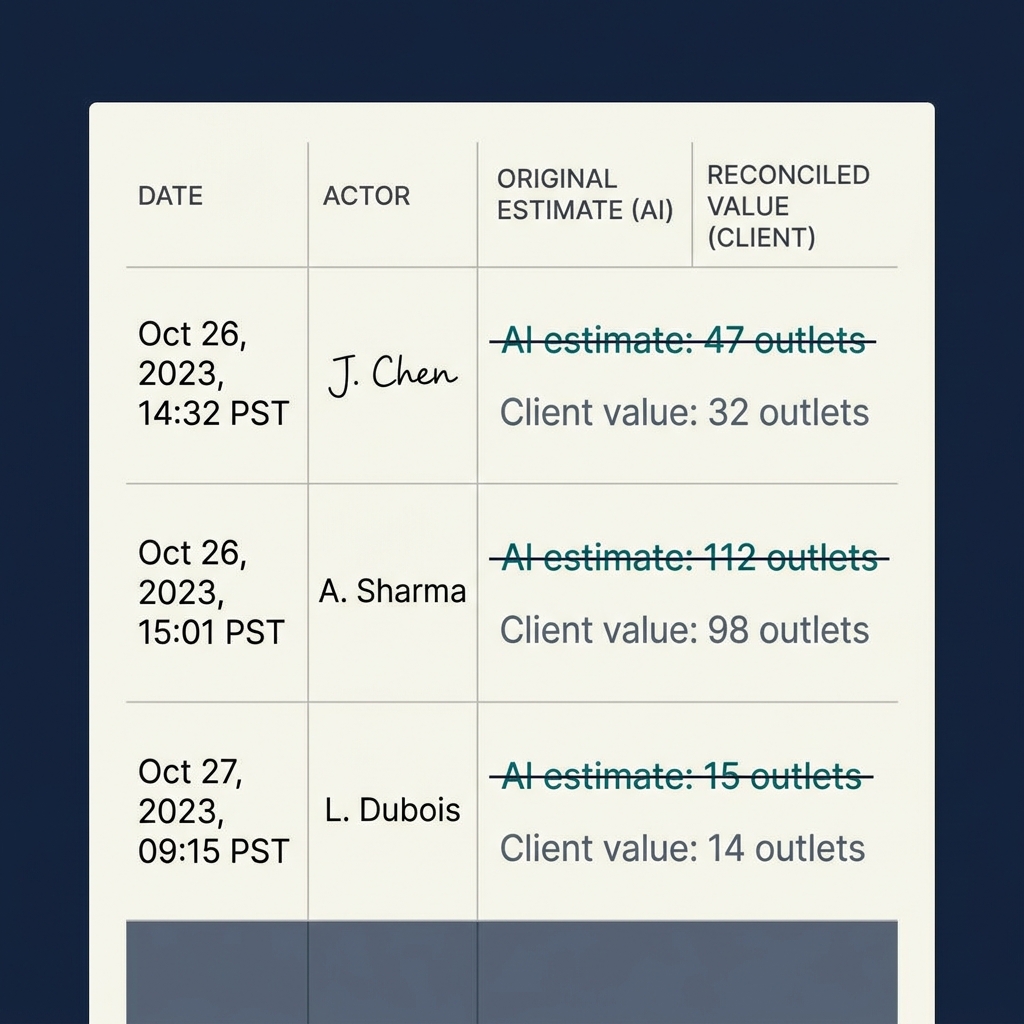

When the AI produces an estimate - the outlet universe in East Java is 8,200 stores - and the client disagrees - no, it's actually 4,600 active stores - the disagreement is not resolved silently. It is not resolved by the client's number replacing the AI's number with no trace. It is not resolved by the Senior Partner ruling in favor of one side.

It is resolved by writing a reconciliation record. The record captures: the original AI value (8,200), the new agreed value (4,600), the reason for the change ("AI model includes store census data that double-counts satellite locations of the same principal account"), the actor who made the change (the client's commercial director), and the timestamp. The new value is used going forward. The old value is preserved in the audit trail.
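To make the shape of the record concrete, here is a minimal sketch in Python. The class name, field names, and the `relative_change` helper are my own illustrative assumptions, not a fixed schema; the values are the East Java example from above.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ReconciliationRecord:
    """One resolved disagreement. Field names are illustrative assumptions."""
    metric: str          # what was estimated
    scope: str           # where the estimate applies
    ai_value: float      # original AI value, preserved for the audit trail
    agreed_value: float  # new value used going forward
    reason: str          # why the value changed
    actor: str           # who made the change
    timestamp: datetime  # when the change was made

    @property
    def relative_change(self) -> float:
        """Size and direction of the revision, relative to the AI value."""
        return (self.agreed_value - self.ai_value) / self.ai_value


record = ReconciliationRecord(
    metric="outlet_universe",
    scope="East Java",
    ai_value=8200,
    agreed_value=4600,
    reason=("AI model includes store census data that double-counts "
            "satellite locations of the same principal account"),
    actor="client commercial director",
    timestamp=datetime.now(timezone.utc),
)
print(f"{record.metric} ({record.scope}): {record.ai_value} -> "
      f"{record.agreed_value}, changed by {record.actor}")
```

Keeping both `ai_value` and `agreed_value` on the same record is the point: the new number is what gets used, and the old one is never lost.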

This is not bureaucracy. It is the mechanism by which disagreement becomes evidence. Over time, the system accumulates reconciliation records across multiple engagements. Patterns emerge. The AI consistently overestimates outlet counts in markets with heavy principal-account structures. The AI consistently underestimates distributor working capital requirements in challenger phases. The AI consistently overestimates DSR productivity in dispersed geographies. Each pattern becomes an input to improving the AI baseline.

Without reconciliation records, none of this learning happens. The disagreement is resolved in conversation, forgotten, and the AI makes the same error on the next engagement. Reconciliation records are how the system gets smarter. Silent overrides are how it stays stupid.
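As a rough illustration of how patterns could be pulled out of accumulated records, the sketch below groups revisions by metric and market context and averages the relative change. The grouping keys, the function name `bias_by_group`, and every sample value except the 8,200 to 4,600 revision are made up for illustration.

```python
from collections import defaultdict
from statistics import mean

# (metric, context, ai_value, agreed_value)
# Only the first row comes from the example in the text; the rest are
# invented sample values used to show the mechanics.
records = [
    ("outlet_universe", "principal-account-heavy", 8200, 4600),
    ("outlet_universe", "principal-account-heavy", 5000, 3500),
    ("dsr_productivity", "dispersed-geography", 40, 30),
    ("working_capital", "challenger-phase", 100, 130),
]


def bias_by_group(records):
    """Mean relative revision, (agreed - ai) / ai, per (metric, context)."""
    groups = defaultdict(list)
    for metric, context, ai_value, agreed_value in records:
        groups[(metric, context)].append((agreed_value - ai_value) / ai_value)
    return {key: mean(changes) for key, changes in groups.items()}


bias = bias_by_group(records)
for (metric, context), change in sorted(bias.items()):
    # A negative mean revision means the AI value was revised downward.
    direction = "overestimates" if change < 0 else "underestimates"
    print(f"AI {direction} {metric} in {context} "
          f"by {abs(change):.0%} on average")
```

A consistently negative mean in one group is the kind of signal that could feed back into the AI baseline. With only a handful of records per group the averages are noisy, so a real system would presumably want a minimum sample size before acting on them.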

There is a deeper principle here about what analytical integrity means, and it's worth naming.

Most organizations implicitly treat analytical outputs as authoritative or not authoritative - the model's answer is correct, or it isn't. The co-build principle treats analytical outputs as hypotheses to be validated or revised. The model's answer is the starting point. The client's answer is the validating signal. The Senior Partner's judgment is what distinguishes substantive validation from stakeholder preference. The reconciliation record is the evidence trail that shows the validation actually happened.

This framing changes how you run engagements. The AI doesn't "deliver" outputs - it proposes them. The client doesn't "approve" outputs - they validate or challenge them. The consultant doesn't "resolve" disagreements - they facilitate the reconciliation. The workflow is designed around the expectation that 20–40% of AI estimates will be revised, mostly by client input, and the system is built to capture those revisions structurally.

Three design principles follow from this.

First, the AI must be capable of presenting its reasoning, not just its answer. When the AI says "outlet universe is 8,200 stores," it must also be able to say "based on census data from source X, adjusted for principal-account structure using method Y, with confidence tier Z." A black-box AI output is not validatable. A glass-box output, with its reasoning exposed, is. Co-build requires glass-box AI by design.

Second, the client input must be captured structurally, not as commentary. If the client says "that number is wrong," the system must prompt them for the new number, the reason, and the source. Free-text commentary that disappears into meeting notes is not reconciliation. It is a conversation that pretended to be one.

Third, the reconciliation record must be immutable. Once written, it cannot be edited. Corrections are new records that reference the original. This is the same architectural pattern as an append-only event log, applied to the specific domain of input reconciliation. It is the mechanism by which the audit trail remains trustworthy over long periods.
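The three principles can be sketched together in a few dozen lines of Python. Everything named here (`Estimate`, `Challenge`, `LogEntry`, `ReconciliationLog`, and their fields) is a hypothetical illustration under my own assumptions, not a description of any particular system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Estimate:
    """Glass-box AI output: the value travels with its reasoning."""
    metric: str
    value: float
    source: str           # which data the estimate is based on
    method: str           # which adjustment was applied
    confidence_tier: str


@dataclass(frozen=True)
class Challenge:
    """Structured client input: a new number, a reason, and a source."""
    proposed_value: float
    reason: str
    source: str
    actor: str


@dataclass(frozen=True)
class LogEntry:
    """Reconciliation record; frozen, so fields cannot be reassigned."""
    entry_id: int
    metric: str
    ai_value: float
    agreed_value: float
    reason: str
    actor: str
    corrects: Optional[int] = None  # entry_id of the record being corrected


class ReconciliationLog:
    """Append-only: entries are added, never edited or removed."""

    def __init__(self) -> None:
        self._entries = []

    def reconcile(self, estimate, challenge, corrects=None):
        # Principle 1: refuse black-box estimates.
        reasoning = (estimate.source, estimate.method, estimate.confidence_tier)
        if not all(part.strip() for part in reasoning):
            raise ValueError("estimate must expose source, method, and tier")
        # Principle 2: refuse commentary that lacks a reason or a source.
        if not challenge.reason.strip() or not challenge.source.strip():
            raise ValueError("challenge needs a reason and a source")
        # Principle 3: a correction is a new entry pointing at an old one.
        if corrects is not None and not 0 <= corrects < len(self._entries):
            raise ValueError("corrects must reference an existing entry")
        entry = LogEntry(len(self._entries), estimate.metric, estimate.value,
                         challenge.proposed_value, challenge.reason,
                         challenge.actor, corrects)
        self._entries.append(entry)
        return entry

    def history(self):
        """Read-only snapshot of every entry, oldest first."""
        return tuple(self._entries)
```

Note that `frozen=True` and a private list are conventions, not guarantees; in a production setting the append-only property would more plausibly be enforced by the storage layer. The sketch only shows where each principle bites in the workflow.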

One observation about co-build, because I've seen it misunderstood more than most ideas in this space.

Co-build is not consensus-building. It is not a process where the AI, the client, and the consultant all have to agree before an output is accepted. Disagreement is normal and expected. What matters is that the disagreement is resolved visibly, with the reasoning captured, and that the system remembers the resolution.

In practice, a Senior Partner-led engagement might run with 15–25% of the AI's outputs revised by client input, another 5–10% revised by Senior Partner judgment after reviewing both sides, and the remaining 65–80% accepted as the AI proposed. The mix of acceptance and revision isn't what makes the process trustworthy. What makes it trustworthy is that you can trace, for any given output, which path it traveled - AI accepted, AI revised by client, AI revised by Senior Partner, original AI estimate marked as withdrawn - and why.

That traceability is what turns a strategy deliverable into something the Regional MD can defend to their board. Without it, the deliverable is just a stack of numbers, some of which are right.
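A small sketch of what that traceability could look like in code, assuming each output is stored with the path it traveled and the reason. The `Path` enum mirrors the four paths named above; the function names and the sample outputs are illustrative assumptions.

```python
from collections import Counter
from enum import Enum


class Path(Enum):
    AI_ACCEPTED = "AI accepted"
    REVISED_BY_CLIENT = "AI revised by client"
    REVISED_BY_SENIOR_PARTNER = "AI revised by Senior Partner"
    WITHDRAWN = "original AI estimate marked as withdrawn"


def trace(outputs, name):
    """Describe which path one named output traveled, and why."""
    path, reason = outputs[name]
    return f"{name}: {path.value} ({reason})"


def path_shares(outputs):
    """Share of outputs on each path; all zeros for an empty engagement."""
    counts = Counter(path for path, _ in outputs.values())
    total = len(outputs)
    return {path: (counts[path] / total if total else 0.0) for path in Path}


# Illustrative engagement: output name -> (path, reason). Sample data only.
outputs = {
    "outlet_universe": (Path.REVISED_BY_CLIENT,
                        "census data double-counts satellite locations"),
    "territory_boundary": (Path.AI_ACCEPTED, "no challenge raised"),
    "distributor_margin": (Path.AI_ACCEPTED, "no challenge raised"),
    "dsr_count": (Path.REVISED_BY_SENIOR_PARTNER,
                  "benchmark from comparable dispersed geographies"),
}

print(trace(outputs, "outlet_universe"))
for path, share in path_shares(outputs).items():
    print(f"{path.value}: {share:.0%}")
```

The shares are a by-product. The lookup in `trace` is the part that matters: any single output can be asked how it got to its current value.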

A note on the role of the Senior Partner in the co-build architecture, because this is where most attempts at the model break down.

The Senior Partner - or whatever the organization calls its senior strategy facilitator - has a specific job in the co-build process that's easy to miss and impossible to skip. They are not the arbiter of truth. They are not the final say on what the correct number is. Their role is to facilitate the reconciliation between AI output and client input in a way that produces better answers than either would produce alone.

This is harder than it sounds. The Senior Partner has their own views on what the right answer should be, built from years of experience in the category or market. They have to subordinate those views to the process. When the AI says 8,200 and the client says 4,600, the Senior Partner's first job is not to decide who's right; it's to ensure that the reasons for the disagreement are surfaced and documented. Only after the reasons are clear does the Senior Partner contribute their own judgment, and that judgment typically looks like: "the AI's number over-counts because of how the census data treats principal accounts; the client's number under-counts because it excludes inactive but listed outlets; the right working number is probably somewhere around 5,400, and we should plan a field verification pass to confirm."

That kind of third-party judgment - informed by experience, skeptical of both inputs, pointing toward a resolution that neither party proposed - is what the Senior Partner adds. Without it, reconciliation collapses into a negotiation between AI overconfidence and client parochialism, with neither party willing to concede and the output stalled.

Three anti-patterns to watch for, each of which defeats the co-build architecture in a specific way.

The AI-deference anti-pattern. The client accepts whatever the AI produces because they assume the AI has more data than they do, or because challenging the AI feels confrontational, or because the deadline is tight and accepting is faster than engaging. The output is accepted without reconciliation records because there were no disagreements to reconcile. The strategy team feels productive. The final deliverable reflects AI output unmediated by operational knowledge. Quality suffers without anyone noticing.

The client-override anti-pattern. The client rejects AI output reflexively because "the AI doesn't understand our market" or "that's not how it works here." Every AI number gets replaced with a client number. The system does accumulate reconciliation records, but they're all one-directional, and the records aren't real reconciliations - they're overrides. The AI contribution is effectively zero. The strategy is built entirely on client input, with the same parochial blind spots that would have produced it without any AI involvement.

The Senior Partner dictation anti-pattern. The Senior Partner, frustrated with the slowness of structured reconciliation, starts making decisions directly. "The number is 5,200. Move on." The reconciliation record captures the decision but not the reasoning, because the reasoning was in the Senior Partner's head and never got articulated. Over time, the strategy becomes the Senior Partner's view, with AI and client input as theater rather than substance. The output is as good as the Senior Partner's judgment, and no better.

Each anti-pattern produces output that looks co-built but isn't. The process metrics may look correct - there are AI outputs, client comments, Senior Partner sign-offs, reconciliation records in the system - but the substance of co-build has been undermined. The output has one voice dominating, and the checks that were supposed to catch that have been subverted.

Detecting these anti-patterns requires looking at the reconciliation records, not just counting them. If 90% of records show client overrides with no AI pushback, something is wrong. If 90% show AI output accepted unchanged, something different is wrong. If the reasoning captured in the records is thin - "client knows better" or "AI is more accurate" without specifics - the process is going through the motions without doing the work.
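The checks above can be sketched as a simple diagnostic over the outcomes of an engagement. The 90% threshold comes from the text; the word-count test for "thin" reasoning, the 50% thin-share cutoff, and the string labels are crude assumptions of mine, standing in for what would realistically be human review.

```python
def diagnose(outcomes, threshold=0.9, min_reason_words=5, thin_share=0.5):
    """Flag likely anti-patterns.

    outcomes: list of (path, reason) tuples, one per reviewed AI output,
    where path is "ai_accepted", "client_override", or "senior_partner".
    """
    warnings = []
    if not outcomes:
        return warnings
    total = len(outcomes)
    client = sum(1 for path, _ in outcomes if path == "client_override")
    accepted = sum(1 for path, _ in outcomes if path == "ai_accepted")
    if client / total >= threshold:
        warnings.append("client-override")
    if accepted / total >= threshold:
        warnings.append("ai-deference")
    # Thin reasoning: revisions whose recorded reason is only a few words,
    # e.g. "client knows better" or "AI is more accurate".
    revised = [reason for path, reason in outcomes if path != "ai_accepted"]
    thin = [r for r in revised if len(r.split()) < min_reason_words]
    if revised and len(thin) / len(revised) > thin_share:
        warnings.append("thin-reasoning")
    return warnings


# Illustrative engagement where nearly every AI number was replaced.
sample = [("client_override", "client knows better")] * 19
sample.append(("ai_accepted", ""))
print(diagnose(sample))
```

A word count is a weak proxy for substance, so a flag from this kind of check is a prompt to read the records, not a verdict.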

One more observation about the co-build architecture, which goes to why it matters beyond this specific product category.

Organizations increasingly face AI tools whose outputs are at once more detailed than human analysts could produce in the available time and less grounded in operational reality than what human operators would produce. The temptation is to pick one side - either "trust the AI" or "override with human judgment." Both defaults produce worse outputs than the integration.

Co-build, with its structured reconciliation and its preservation of both AI and human input, is a pattern that extends beyond distributor strategy to any context where AI analytical capability meets operational ground truth. Financial planning, supply chain optimization, marketing mix modeling, customer segmentation - each of these domains has an AI-analytical layer and an operational-knowledge layer, and the output quality depends on integrating them rather than choosing one.

The pattern is portable. The specific architecture - AI baseline, Senior Partner facilitation, client input, reconciliation records, immutable audit trail - adapts to different domains with the same fundamental structure. Organizations that learn to operate this way in one domain can apply the learning to others. That's a capability worth developing, not just a product feature.